Ishaan Ansari

I am a Software Engineer L2 at Think Future Technologies Pvt. Ltd., part of the machine learning team, where I work at the intersection of computer vision and language modelling. Before this, I graduated with a Bachelor's degree in Computer Science and Engineering with Honors from Jamia Hamdard University, Delhi, in 2023. As an undergraduate, I worked in machine learning with a focus on computer vision in healthcare under the supervision of Anam Saiyeda. My eventual goal is to help build AI that can reliably and autonomously perform complex tasks for extremely long periods of time without human intervention. Read below to learn more about my research interests and past work.

Email / GitHub / Twitter / LinkedIn / Curriculum Vitae

I work on problems related to interpretability, with a focus on large language models and multimodal systems.

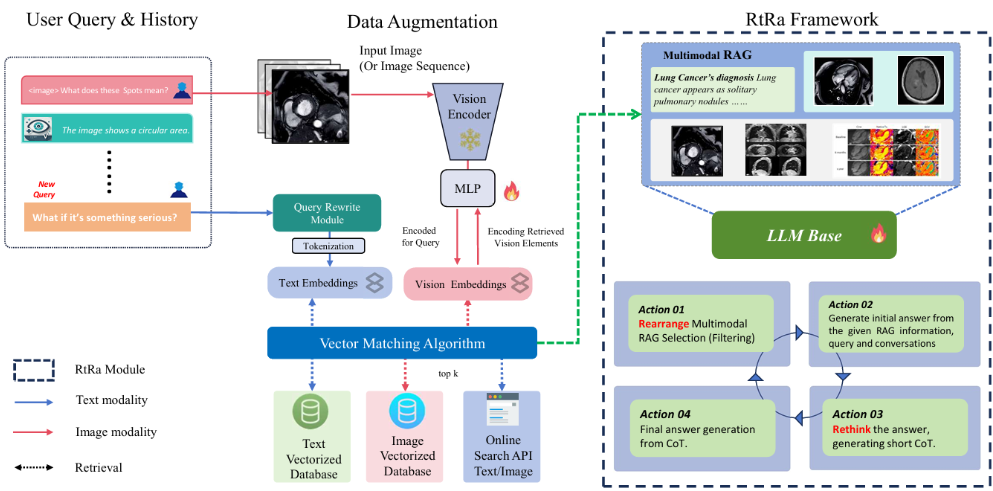

MIRAGE

code / A multimodal RAG framework that integrates visual embeddings from medical images with retrieved clinical knowledge, using dynamic prompt control to improve factual precision and interpretability in medical reasoning tasks.

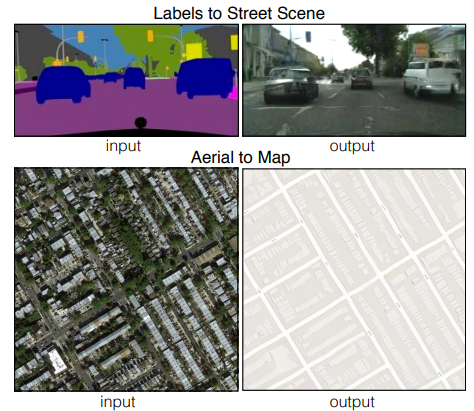

GeoMorph

code / Implemented a Pix2Pix GAN for mapping satellite/aerial images to their equivalent map-view images.

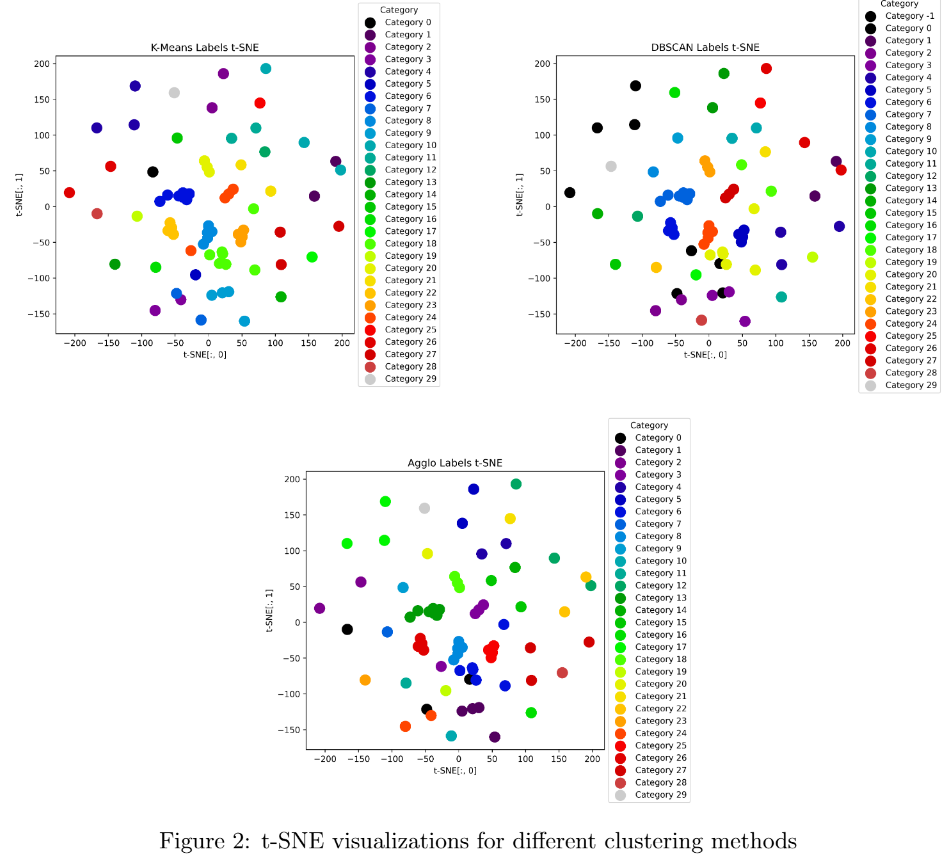

Text guided image clustering

code / Compared image clustering using visual, text-guided, and fine-tuned deep learning features on a subset of the Food-101 dataset.

These include experiments, prototypes, or learning-oriented work.

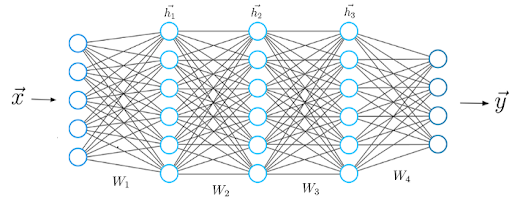

History of Deep Learning

code / An ongoing project in which I implement fundamental deep learning architectures from scratch.

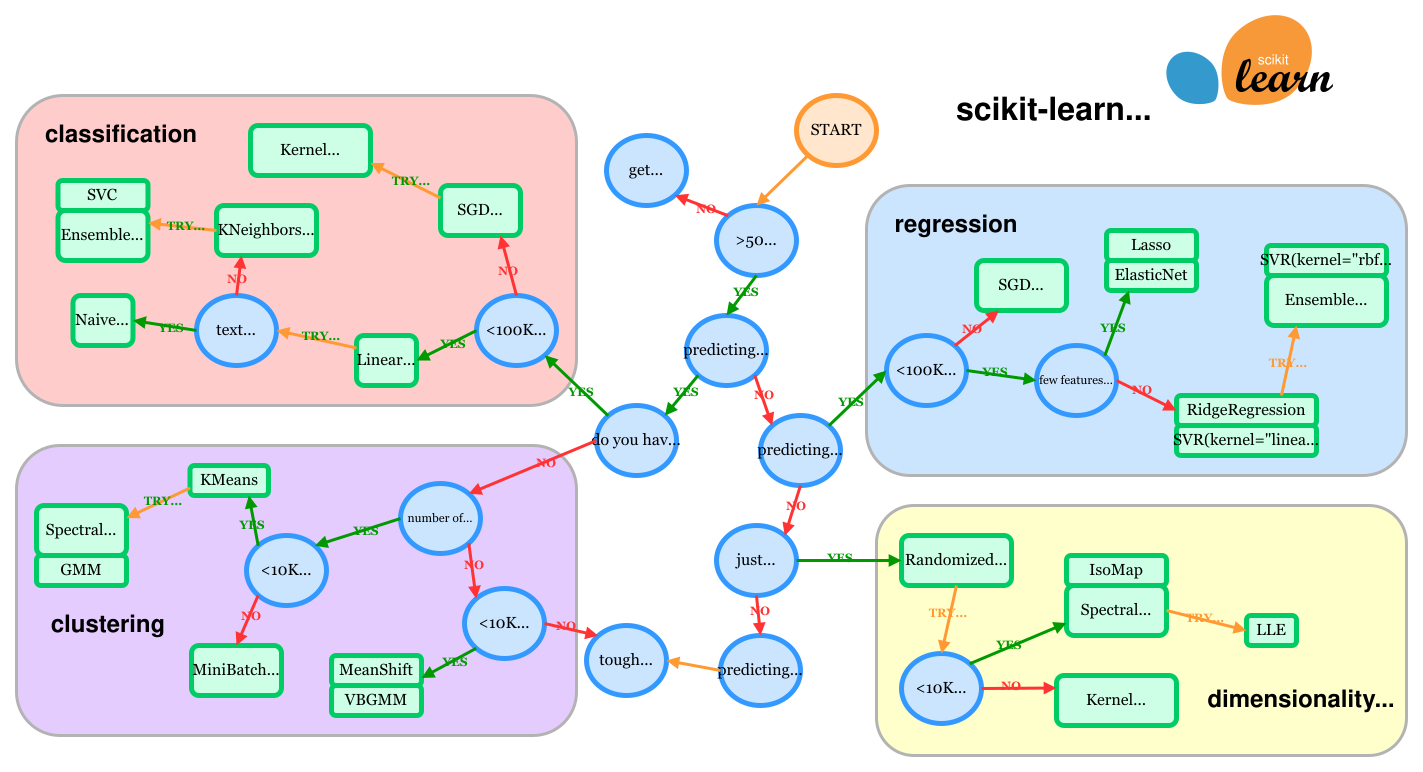

Machine Learning algorithms

code / This repository contains implementations of classical machine learning algorithms from scratch.

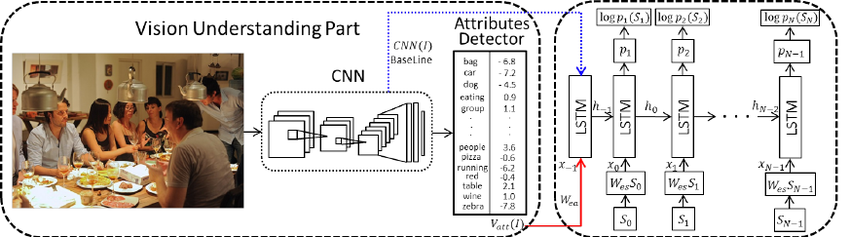

Captionix

code / Image caption generator that uses a CNN, an LSTM, and an attention mechanism to recognize the context of an image and describe it in natural language.

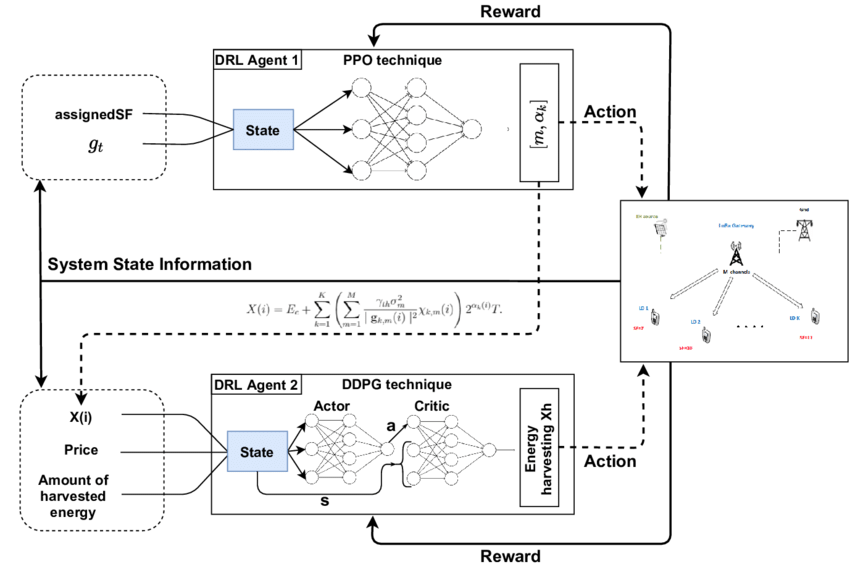

Reinforcement Learning

code / This repository contains various implementations of deep reinforcement learning algorithms across different environments.

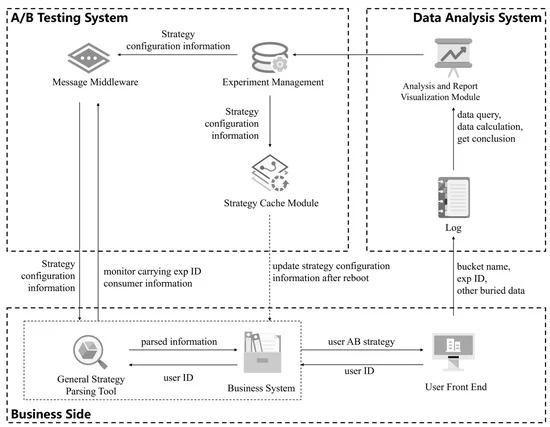

AB Testing

code / In this A/B test, we split the audience in half: the control group gets a Facebook campaign with ‘maximum bidding’ and the test group gets one with ‘average bidding’.

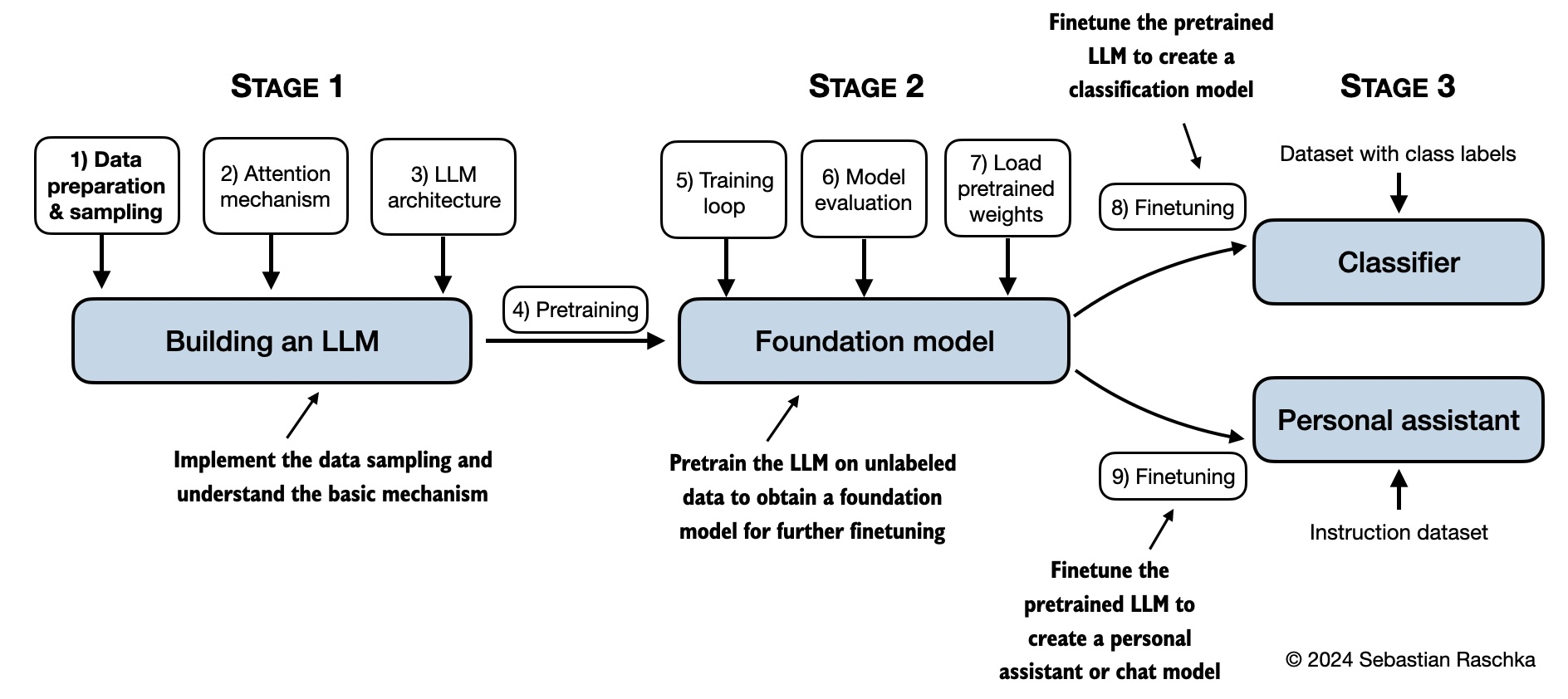

LLMs from scratch

code / A step-by-step implementation of a Large Language Model (LLM) from scratch, covering data preparation, model building, pretraining, and fine-tuning.

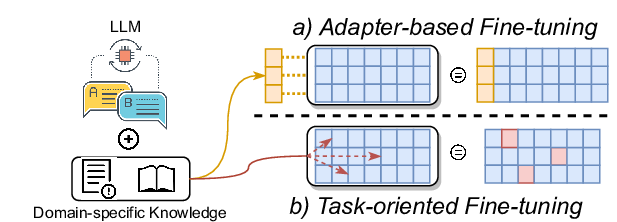

Fine tuning LLMs

code / A practical guide to fine-tuning various open-source LLMs such as LLaMA 2 and Mistral, using efficient techniques like LoRA and quantization.

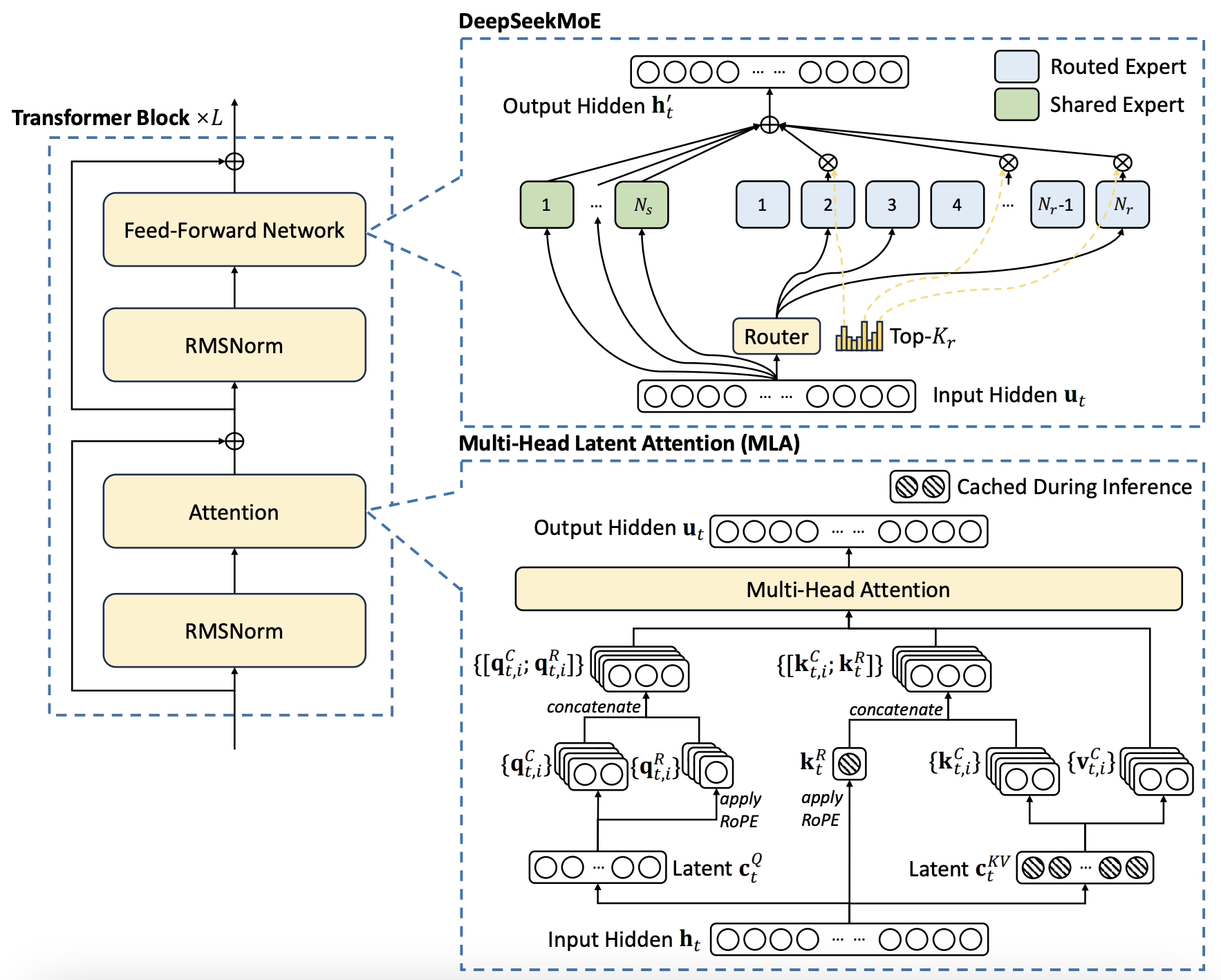

DeepSeek from scratch

code / DeepSeek V3 introduces architectural improvements over traditional transformers, including Multi-Head Latent Attention (MLA), Mixture of Experts (MoE), and Multi-Token Prediction (MTP).

I like sharing my thoughts on a variety of topics on my Substack blog. Below are all my blog posts.

February 01, 2026

Imagine this: you are working on a critical system that directly impacts user experience, and one tiny dataset tweak flips its behaviour. What would you do? Let's explore some actionable checks to bolt into your evaluation pipeline tonight.

Read on Substack →

I am actively mentoring undergraduate and master’s students in LLMs and Vision, and I look forward to supporting more learners. I especially encourage students with diverse backgrounds to connect and explore opportunities for growth and development. If you are interested, send an introductory email that includes:

- A brief introduction about yourself.

- Your academic background and areas of interest.

- Your CV (optional but preferred).

This site adapts design elements from Jon Barron's website.